AI Jargon Decoded for Business Leaders: The Foundational Terms

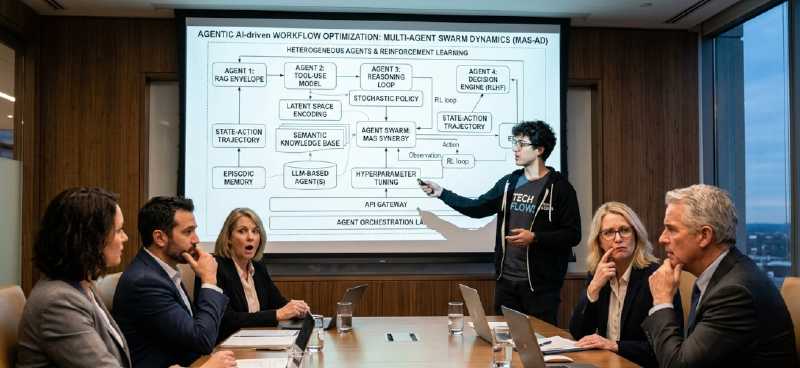

The Jargon Barrier to Strategic AI Adoption

You’ve read the reports, sat through the boardroom presentations, and seen the headlines: Artificial Intelligence is no longer a futuristic concept; it’s a present-day strategic imperative. The potential for transformative efficiency, insight, and competitive edge is undeniable. Yet, for many seasoned leaders, the journey from recognizing AI’s potential to approving a concrete implementation plan hits an unexpected roadblock long before the budget is discussed.

That roadblock isn’t a lack of vision or resources. It’s language. Jargon.

When an AI expert buries you under terms like “fine-tuning a foundational model,” “mitigating hallucinations with RAG,” or “orchestrating autonomous agents,” it can feel less like strategic planning and more like deciphering an obscure technical manual. This dense terminology creates a fog of uncertainty. It obscures the tangible business value, muddies the assessment of risks and ROI, and ultimately paralyzes decision-making. How can you confidently invest in and champion a solution whose language you don’t fully speak?

You are not alone. This experience is the norm, not the exception, for executives navigating this new landscape.

This article series, AI Jargon Decoded for Business Leaders, is designed to cut through that fog. Our mission is to empower you with clarity. We will systematically translate the most pervasive and confusing AI jargon into clear, actionable business concepts. This isn’t about turning you into an AI expert; it’s about equipping you with the foundational understanding needed to ask the right questions, evaluate vendor claims critically, and make informed strategic choices.

By the end of this first installment, you will understand core terms like AI Models, Tokens, RAG, and AI Agents not as abstract tech buzzwords, but as fundamental levers for transformation, quality, and security within your organization. This knowledge is the first, crucial step in moving from passive interest to empowered leadership in your company’s AI journey.

The Core Engine: AI Models – More Than Just “AI”

When a team says, “We need to implement AI,” the first and most critical question you should ask is: “What kind?” The term “AI” is a vast umbrella. The specific power, cost, and applicability of any solution lie in the AI Model at its heart.

Jargon Decoded: AI Models (aka Foundation Models)

Think of an AI model not as a monolithic “artificial intelligence,” but as a highly specialized engine. Each model is a complex mathematical system trained on enormous datasets to recognize patterns and perform specific types of tasks. The current revolution is powered primarily by Large Language Models (LLMs), a type of model trained on text to understand, generate, and manipulate human language. These general-purpose LLMs (like GPT, DeepSeek, Llama, or Claude) serve as a versatile starting point when dealing with text information.

Many more models are available on the market for images, voice, music, and video. Each has unique capabilities, and one cannot replace the others. There is, unfortunately, no AI Swiss Army knife that covers every possible use case.

Business Translation: The Right Tool for the Right Job

You wouldn’t use a Formula 1 engine to power a bicycle. Similarly, different AI models have different strengths:

- Some are fast and can accelerate the simplest execution tasks: we call them Text Models.

- Others excel at logical reasoning, code generation, or structured data analysis: they are Reasoning Models.

- None of these models is a reliable calculator: even a simple task such as adding more than ten two-digit numbers can produce mistakes.

- And you need a different model to generate images.

Fuel & Cost: Tokens – The Currency of AI

Once you’ve selected the right AI “engine,” you need to understand what makes it run. In the world of generative AI, that fuel is measured in Tokens. This concept is critical because it directly translates to both performance and, most tangibly for business leaders, cost.

Jargon Decoded: Tokens

A token is the basic unit of processing for an AI model. It’s not always a full word. Common words are single tokens, but longer words can be broken into multiple tokens, and punctuation counts. For example, the sentence “AI integration is strategic!” might be broken into tokens like: [“AI”, “ integ”, “ration”, “ is”, “ strategic”, “!”].

Every interaction with an AI model consumes tokens in two ways:

- Input (Prompt) Tokens: The text, data, or instructions you provide.

- Output (Result) Tokens: The text or code the model generates in response.

Business Translation: The Direct Link to Your Budget

Think of tokens as the “compute currency” or “digital fuel.” Private corporate AI services are typically charged based on token consumption. Therefore:

- A long, detailed report you ask the AI to summarize consumes many input tokens.

- The concise summary it generates consumes output tokens.

- A simple query with a one-sentence answer is inexpensive. A complex analysis of a 100-page document with a detailed recommendation memo is a significant, measurable cost.
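To make the link between tokens and budget concrete, here is a minimal sketch of the arithmetic. The prices and token counts are placeholder assumptions chosen for illustration, not any vendor's actual rates, and `TokenPrice` and `estimate_cost` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass
class TokenPrice:
    """Hypothetical price list, in dollars per one million tokens."""
    input_per_million: float
    output_per_million: float


def estimate_cost(input_tokens: int, output_tokens: int, price: TokenPrice) -> float:
    """Return the estimated cost in dollars of a single model call."""
    input_cost = input_tokens / 1_000_000 * price.input_per_million
    output_cost = output_tokens / 1_000_000 * price.output_per_million
    return input_cost + output_cost


# Placeholder prices for illustration only; real prices vary by vendor and model.
PRICE = TokenPrice(input_per_million=2.0, output_per_million=8.0)

# A short question with a one-sentence answer (assumed token counts).
simple_query = estimate_cost(input_tokens=50, output_tokens=30, price=PRICE)

# A long document plus a detailed memo (assumed 60,000 and 2,000 tokens).
document_analysis = estimate_cost(input_tokens=60_000, output_tokens=2_000, price=PRICE)

print(f"Simple query:      ${simple_query:.5f}")
print(f"Document analysis: ${document_analysis:.5f}")
```

Multiplying the per-call estimate by the expected number of calls per month gives a first usage-based forecast, which is the kind of figure to request from a vendor.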

Key Insight for Leaders

Token awareness is operational and financial intelligence. It allows you to:

- Budget Accurately: Move from vague “AI project” budgets to forecasts based on expected usage volume and complexity.

- Design for Efficiency: Structure processes and prompts to be concise and effective, maximizing value per token spent. This is a core skill we teach.

- Evaluate Vendor Proposals: Understand the cost drivers behind different AI service models.

Knowledge & Hallucinations: RAG = Knowledge Management

You have the right engine (model) and understand its fuel (tokens). Now, you face the most significant business risk in generative AI adoption: inaccuracy. An AI confidently presenting fabricated information, known as a “hallucination,” can derail decisions, damage credibility, and create legal or compliance exposure. One strategic solution to this problem is a technology called RAG (Retrieval-Augmented Generation).

Jargon Decoded: Hallucinations & RAG

- Hallucination: This occurs when an AI model generates convincing but incorrect, nonsensical, or entirely fabricated information. It is not lying; it is statistically generating a likely-sounding response that isn’t grounded in verified facts. This is a fundamental limitation of models trained on broad, public data. No AI model is free from hallucinations.

- RAG (Retrieval-Augmented Generation): This is the architectural solution. Before generating a final answer, a RAG system first retrieves relevant information from a designated, curated, and trusted knowledge base. It then augments the AI’s prompt with this verified data, grounding the generation of the answer in your specific truth.

Business Translation: From Generic Chatbot to Company Expert

Think of it this way: A standard AI model is a brilliant, generalist consultant who has read the entire internet but doesn’t know your company. A RAG-powered AI is that same consultant, but now equipped with full, real-time access to your proprietary playbook, financial records, and SOPs.

- Without RAG: “What were our Q3 sales figures for the EMEA region?” → The model guesses or refuses, as it wasn’t trained on your private data.

- With RAG: The same query triggers a search of your internal CRM and sales databases, retrieves the correct figures, and instructs the model to synthesize an answer based solely on that data.
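The retrieve-then-augment pattern can be sketched in a few lines. This is a simplified illustration under stated assumptions: the knowledge base is a hard-coded list with placeholder figures, retrieval is plain word overlap rather than the search technology a real system would use, and the final call to an AI model is left out. The names `KNOWLEDGE_BASE`, `retrieve`, and `build_prompt` are hypothetical.

```python
import re

# Hypothetical internal knowledge base; in practice this would be your CRM,
# document store, or sales database. All figures are placeholders.
KNOWLEDGE_BASE = [
    "Q3 sales for the EMEA region: 4.2M EUR (placeholder figure).",
    "Q3 sales for the APAC region: 3.1M EUR (placeholder figure).",
    "Travel policy: economy class for flights under six hours.",
]


def words(text: str) -> set[str]:
    """Lowercase the text and split it into a set of words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, documents: list[str], top_k: int = 1) -> list[str]:
    """Retrieve: rank documents by how many words they share with the question."""
    question_words = words(question)
    ranked = sorted(
        documents,
        key=lambda doc: len(question_words & words(doc)),
        reverse=True,
    )
    return ranked[:top_k]


def build_prompt(question: str, context: list[str]) -> str:
    """Augment: place the retrieved facts in front of the question."""
    facts = "\n".join(f"- {item}" for item in context)
    return (
        "Answer using only the facts below. "
        "If the answer is not there, say you do not know.\n"
        f"Facts:\n{facts}\n"
        f"Question: {question}"
    )


question = "What were our Q3 sales figures for the EMEA region?"
context = retrieve(question, KNOWLEDGE_BASE)
prompt = build_prompt(question, context)
print(prompt)  # This augmented prompt is what would be sent to the model.
```

The model never needs to have been trained on the sales data; it only has to read the facts placed in its prompt, which is why the quality of the knowledge base matters as much as the model.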

Key Insight for Leaders

Implementing RAG is not a technical “nice-to-have”; it is a non-negotiable foundation for trust and accuracy in business-critical applications. It transforms AI from a potential source of risk into a reliable conduit for your institutional knowledge.

We have eliminated the term “RAG” from our marketing and presentations. We talk about a Knowledge Base instead, as it describes the business objective rather than the technology.

Capability & Automation: What is an AI Agent?

Understanding the engine, fuel, and quality control sets the stage for the ultimate goal: automation. This is where the promise of AI translates into tangible productivity gains and liberated human capital. The concepts of AI Agents, Workflow Agents and Autonomous Agents define the scope and sophistication of this automation.

Jargon Decoded: AI Agents

- AI Agent: A software program that uses AI models as its “brains” to perform a specific, multi-step task.

- Workflow Agent: Follows pre-set instructions or rules to execute a single action or a simple sequence. Think of it as a digital specialist.

- Autonomous Agent: Doesn’t follow a script; it improvises the process. Given a high-level objective, it can break it down into sub-tasks, decide on the sequence, use tools, and iterate until the goal is met. Think of it as a digital project manager or analyst.
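The difference between the two kinds of agent comes down to who decides the next step. The sketch below is a toy illustration, not a production design: the three tools are stand-in functions with placeholder data, and `choose_next_tool` uses simple rules where a real autonomous agent would ask an AI model to decide. All names are hypothetical.

```python
from typing import Callable, Optional


# Hypothetical tools: plain functions standing in for real system integrations.
def fetch_invoice(state: dict) -> dict:
    return {**state, "invoice": {"amount": 1200, "currency": "EUR"}}


def validate_invoice(state: dict) -> dict:
    return {**state, "valid": state["invoice"]["amount"] > 0}


def file_report(state: dict) -> dict:
    return {**state, "report": f"Invoice valid: {state['valid']}"}


TOOLS: dict[str, Callable[[dict], dict]] = {
    "fetch_invoice": fetch_invoice,
    "validate_invoice": validate_invoice,
    "file_report": file_report,
}


def run_workflow_agent(state: dict) -> dict:
    """Workflow agent: the sequence of steps is fixed in advance."""
    for step in (fetch_invoice, validate_invoice, file_report):
        state = step(state)
    return state


def choose_next_tool(state: dict) -> Optional[str]:
    """Stand-in for the model's decision about which tool to use next."""
    if "invoice" not in state:
        return "fetch_invoice"
    if "valid" not in state:
        return "validate_invoice"
    if "report" not in state:
        return "file_report"
    return None  # Goal met: nothing left to do.


def run_autonomous_agent(goal: str, max_steps: int = 10) -> dict:
    """Autonomous agent: loop, choosing each step based on the current state."""
    state: dict = {"goal": goal, "history": []}
    for _ in range(max_steps):  # A step limit keeps the loop bounded.
        tool_name = choose_next_tool(state)
        if tool_name is None:
            break
        state = TOOLS[tool_name](state)
        state["history"].append(tool_name)
    return state


workflow_result = run_workflow_agent({})
autonomous_result = run_autonomous_agent("Check the latest invoice")
print(workflow_result["report"])
print(autonomous_result["history"])
```

Both agents reach the same result here, but only the second could take a different path if the situation changed, which is also why it needs limits such as `max_steps` and human oversight.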

Business Translation: The Hierarchy of Automation

This distinction is crucial for setting realistic expectations and prioritizing projects:

- Workflow Agents automate low-value, repetitive tasks: data entry, basic content formatting, initial triage, scheduled reporting. They are your efficiency engines applied to known processes.

- Autonomous Agents tackle unknown, complex workflows that require interaction with multiple data sources, systems, and information formats. They are your force multipliers for process innovation.

Key Insight for Leaders

The strategic deployment of Agents is about augmenting your team, not replacing it. It’s about redirecting human intelligence from routine execution to strategic oversight, creative problem-solving, and relationship management. The goal is to automate the process, not just the task.

Customization & Execution: Fine-Tuning vs. Inference

You have a clear goal, a chosen model, and a vision for automation. The final strategic fork in the road is customization: how much should you adapt the AI to your unique business? This is the domain of Training, Fine-Tuning, and Inference—terms that define the spectrum of effort, cost, and control in your AI deployment.

Jargon Decoded: Training, Fine-Tuning, Inference

- Training: The initial, monumental process of building a foundational AI model from scratch. This involves feeding a neural network vast datasets over weeks or months using immense computing power. This is the realm of tech giants like OpenAI or Google. For a business leader, think of this as funding the basic research to invent the internal combustion engine. You are not doing this.

- Fine-Tuning: The process of taking a pre-trained, general-purpose model and further training it on a smaller, specific dataset you provide. This “teaches” the model your unique domain language, style, and knowledge. It’s akin to taking that standard engine and recalibrating it for high-altitude performance or marine use. The model’s core architecture remains, but its outputs become specialized. You most likely don’t need this if you implement a Knowledge Base efficiently.

- Inference: This is simply the act of using the model: asking it a question and getting an answer. Whether you’re using a raw foundational model or a fine-tuned one, the moment it generates text for you, it’s performing inference. This is driving the car.

Business Translation: The Strategic Investment Spectrum

The choice between using a model “out-of-the-box,” fine-tuning it, or exploring other methods is a critical cost-benefit analysis.

- Inference-Only (Off-the-Shelf): Fastest to deploy, lowest upfront cost. Best for general tasks, proof-of-concepts, or when combined with a strong integration.

- Fine-Tuning: Requires a curated dataset, technical expertise, and ongoing cost. Justified when you need the model to inherently “think” in your domain’s patterns across all its responses. It’s a tailored integration for core competitive functions.

- Knowledge Base as an Alternative: Often, providing the model with the right context at the moment of inference can achieve similar specificity to fine-tuning, with more flexibility and less upfront training. The choice is nuanced and strategic.

Key Insight for Leaders

The biggest mistake is assuming you must fine-tune to get value. For most established companies, the optimal, proven path is a hybrid approach: using powerful off-the-shelf models, grounding them in your data via a Knowledge Base for accuracy, and only pursuing fine-tuning for a few highly specialized, high-volume use cases where it delivers clear ROI.

Conclusion: From Confusion to Strategic Clarity

The journey through AI’s foundational jargon is not an academic exercise. It is the essential first step in transforming from a passive observer of the AI revolution into an active, empowered architect of your company’s future. Let’s reframe what we’ve decoded:

- AI Models are not just “AI”; they are specialized engines. Your strategic task is selecting the right one for the job.

- Tokens are not just tech specs; they are the measurable fuel and direct cost driver of your AI initiatives.

- Knowledge Base (RAG) is not just a technology; it is the critical quality control system that grounds AI in your truth, turning a risk (hallucinations) into a reliability asset.

- AI Agents are not just chatbots; they are your new digital workforce, capable of automating everything from simple tasks to complex, multi-step processes.

- Fine-Tuning is not always necessary; it is a strategic lever on a spectrum of customization, where the business case must be clear and compelling.

Together, these concepts form the core components of a modern, AI-augmented operation: the Engine, the Fuel, the Quality Control, the Workforce, and the Customization process.

Your New Foundational Clarity

With this lexicon demystified, you are now equipped to move beyond vague aspirations. You can engage with vendors, data scientists, and your own teams with precision. You can ask the sharp, strategic questions that separate hype from substance:

- “Are you proposing a generic model, or one fine-tuned for our industry’s regulatory language?”

- “How many different AI models are available in the solution? How often do you integrate new models?”

- “How does your architecture implement RAG to ensure answers are grounded in our proprietary data?”

- “What is the expected token consumption for this workflow, and how does that translate to operational cost?”

- “Is this an autonomous agent, or a workflow automation?”

Ready to move beyond jargon and build a tailored, secure AI strategy for your established business?

Book a 30-minute expert review to identify the next step that will accelerate your AI Transformation.

We are Here to Empower

At System in Motion, we are on a mission to empower as many knowledge workers as possible to start or continue their GenAI journey.
